Which AI Model Actually Writes the Best Code?

That question gets harder to answer every few months, and 2026 has made it harder than ever. Six frontier models now score within two percentage points of each other on the industry's toughest coding benchmark. New models are shipping every few weeks. The difference between picking the right model and the wrong one is now measured in real developer hours and real money.

This guide skips the fluff and gives you what you actually need: a clear breakdown of the best AI coding models right now, what each one is genuinely good at, and a straightforward framework for choosing the right one for your work.

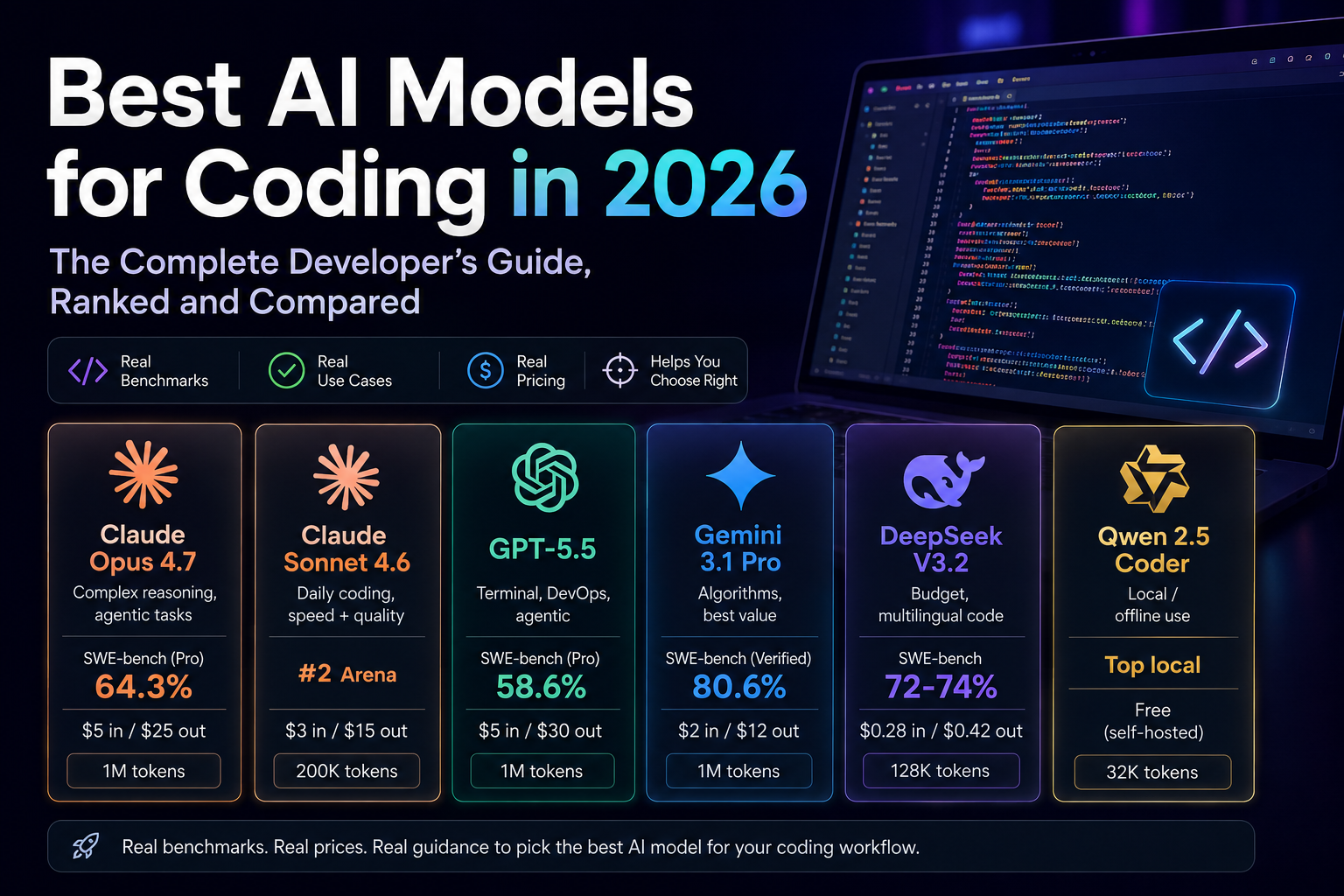

Top AI Coding Models at a Glance

Here is how the leading models stack up across the metrics that matter most for real development work.

| Model | Best For | SWE-bench | Pricing (1M tokens) | Context |

|---|---|---|---|---|

| Claude Opus 4.7 | Complex reasoning, agentic tasks | 64.3% Pro | $5 in / $25 out | 1M tokens |

| Claude Sonnet 4.6 | Daily coding, speed + quality | #2 Arena | $3 in / $15 out | 200K tokens |

| GPT-5.5 | Terminal, DevOps, agentic | 58.6% Pro | $5 in / $30 out | 1M tokens |

| Gemini 3.1 Pro | Algorithms, best value | 80.6% Verified | $2 in / $12 out | 1M tokens |

| DeepSeek V3.2 | Budget, multilingual code | 72-74% | $0.28 in / $0.42 out | 128K tokens |

| Qwen 2.5 Coder | Local / offline use | Top local | Free (self-hosted) | 32K tokens |

Pricing reflects standard API rates as of May 2026. SWE-bench Verified measures real GitHub issue resolution, which is the most reliable proxy for real-world software engineering performance.

1. Claude Opus 4.7 - Best for Complex, High-Stakes Coding

Opus 4.7 is Anthropic's flagship model, and it earns that title. It leads SWE-bench Pro at 64.3% and tops computer-use benchmarks with an OSWorld score of 78.0%. More importantly, it handles the kind of work where a mistake is genuinely costly: legacy refactors, security-sensitive architecture, and multi-file agentic tasks that require the model to think several steps ahead.

What makes Opus different is not raw speed but reasoning depth. Give it a messy codebase and an ambiguous instruction, and it will reason through the implications before writing a single line. For the problems that actually keep senior developers up at night, Opus is the right tool.

Best for:

- Complex refactoring and architecture work across large codebases

- Agentic workflows that require multi-step planning and autonomous execution

- Security reviews and high-stakes production changes

- Tasks where getting it right matters more than getting it fast

The trade-off is cost. At $5 per million input tokens and $25 per million output tokens, Opus is expensive for routine work. Most developers use it selectively, pulling it in for the hard problems while relying on Sonnet for everything else.

2. Claude Sonnet 4.6 - Best Daily Driver for Most Developers

If there is one model you should have open all day while coding, it is Sonnet 4.6. It holds the second-highest score in live coding arena rankings and it is the model powering Cursor and Windsurf, which are the two most popular AI IDEs that professional developers are actually paying for and using daily. That is the most honest endorsement any model can get.

Sonnet is fast, reliable, and handles the everyday work that makes up 90% of a developer's day: writing components, generating API routes, creating tests, reviewing pull requests, and explaining unfamiliar code. It is not as deep a thinker as Opus, but for most tasks you will not notice the difference, and you will spend a fraction of the cost.

Best for:

- Day-to-day feature development including components, endpoints, tests, and code reviews

- Developers using Cursor, Windsurf, or any Claude-powered AI IDE

- Freelancers and agencies where speed and cost directly affect margins

- Anyone who needs a reliable, high-quality model they can leave running all day

3. GPT-5.5 - Best for Terminal and DevOps Workflows

GPT-5.5 is OpenAI's strongest public coding model right now, and it has a clear specialty: terminal-heavy work. It scores 82.7% on Terminal-Bench 2.0, which is a meaningful lead over competitors, and 58.6% on SWE-bench Pro. If your daily work involves shell scripting, Terraform configs, Kubernetes manifests, or CI/CD pipeline debugging, GPT-5.5 has a genuine advantage.

It also ships with native computer-use capabilities and a 1 million token context window in Codex mode, making it capable of reading and reasoning over enormous codebases in a single pass. One important note: GPT-5.5 rewards specific, well-structured prompts. It performs noticeably better when you give it clear context compared to leaving things vague, unlike Opus which handles ambiguity more gracefully.

Best for:

- DevOps engineers, SREs, and infrastructure-as-code work

- Shell scripting, system administration, and terminal automation

- Teams already using the OpenAI and Microsoft ecosystem

- Large-context tasks requiring reasoning over million-token codebases

4. Gemini 3.1 Pro - Best Value at Frontier Performance

Gemini 3.1 Pro, released in February 2026, is the most disruptive model in this list. Not because it tops every benchmark, but because of what it costs. At $2 per million input tokens and $12 per million output tokens, it matches Claude Opus 4.6's SWE-bench Verified score of 80.6% at less than half the price. For teams running hundreds of coding API calls daily, that gap is very significant.

It also leads LiveCodeBench Pro with an Elo score of 2,887, making it the strongest available model for algorithmic reasoning and competitive programming. If your work is algorithm-heavy, Gemini 3.1 Pro is the best value option on the market right now.

The honest caveat: developer sentiment is mixed. First impressions tend to be positive, but there are consistent reports of occasional quality regressions and billing complexity on certain API surfaces. It performs best with structured, detailed prompts.

Best for:

- Algorithmic problem solving, data structures, and competitive programming

- Startups and teams with high API volume and real budget constraints

- Test-driven development and writing comprehensive test coverage

- Teams who want frontier-level quality without paying frontier-level prices

5. DeepSeek V3.2 – Best Affordable Option for API-Heavy Teams

DeepSeek V3.2 offers surprisingly competitive pricing for what it delivers. Input tokens cost $0.28 per million and output tokens $0.42 per million, making it a fraction of the cost compared to premium models like Claude Opus. And it is not a toy model. It scores 72 to 74% on SWE-bench Verified, which is sufficient for a wide range of production coding tasks. Its 83.3% on LiveCodeBench and strong multilingual performance make it well suited for polyglot teams working across Python, Go, Rust, TypeScript, and Java.

The main consideration for enterprise teams is data governance. DeepSeek is a China-based company, so teams with strict data residency or compliance requirements should evaluate it carefully before adopting it in production workflows.

Best for:

- High-volume API workflows where cost is the primary constraint

- Multilingual codebases and polyglot development environments

- Boilerplate generation and repetitive coding tasks at scale

- Using as a supplementary model alongside a premium option for lighter tasks

6. Local Models - Best for Privacy and Zero API Costs

Not everyone wants to send code to a cloud API. Whether it is intellectual property concerns, internet connectivity, or simply not wanting a monthly subscription, local models have become a legitimate option in 2026.

Qwen 2.5 Coder 32B is the consensus best local coding model. Built specifically for code, it handles multi-file refactoring, multiple languages, and complex logic better than any other locally-run model. The hardware requirement is 18 GB of RAM, which puts it in mid-to-high-end laptop territory. If you have less hardware available, Gemma 4 27B fits on a machine with 32 GB of RAM and delivers around 80% of Qwen's quality at a lower hardware cost.

The easiest setup is to install Ollama, pull your model, and add the Continue.dev extension to VS Code. You get GitHub Copilot-quality autocomplete and chat running entirely on your machine, with no internet required and no per-token fees.

Best for:

- Developers with IP concerns or working on proprietary codebases

- Businesses that cannot send sensitive data to third-party cloud servers due to compliance or industry regulations

- Anyone who wants no API or additional costs after the initial hardware investment

- Offline environments such as air-gapped systems or low-connectivity areas

How to Choose the Right Model for Your Work

Here is the honest answer most guides avoid: the best AI coding model is not one single model. The developers getting the most out of AI in 2026 treat models like a toolbox. They use the right AI model for the right job.

A practical starting point for most developers is to use Claude Sonnet 4.6 as your daily driver for the bulk of your work. Pull in Opus 4.7 or GPT-5.5 when you hit something genuinely complex. If budget is tight, Gemini 3.1 Pro gives you frontier quality at a lower price. If you have privacy requirements, go local with Qwen 2.5 Coder.

Whichever model you choose, give it real context. The quality of your prompt matters more than the difference between most top-tier models. A well-written prompt with clear context, your tech stack, and specific constraints will consistently outperform a vague request sent to the most expensive model available.

Final Thoughts

AI coding in 2026 is not about finding one perfect model and sticking with it forever. The landscape is shifting fast enough that the best model today may not be the best model in three months. What matters more is building the habit of using these tools effectively, knowing when to reach for them, how to prompt them well, and how to review their output critically before it ships.

The models covered in this guide represent the best of what is available right now. Pick one that fits how you work and what you can afford, get comfortable with it, and switch things up as better options come along. The developers who will get the most value from AI are not the ones who picked the perfect model. They are the ones who learned to use these tools as a natural part of how they work.

Key Takeaways

- Claude Opus 4.7 is best for complex reasoning, agentic tasks, and high-stakes production work.

- Claude Sonnet 4.6 is the best daily driver and powers Cursor and Windsurf IDEs.

- GPT-5.5 is best for terminal-heavy DevOps workflows and large-context agentic coding.

- Gemini 3.1 Pro matches frontier benchmarks at roughly half the cost of Claude or GPT-5.5.

- DeepSeek V3.2 is the best budget API option for high-volume and multilingual teams.

- Qwen 2.5 Coder 32B is the best local model with no API costs and full privacy.

- The smartest strategy in 2026 is using multiple models as a build toolbox, not one model for everything.